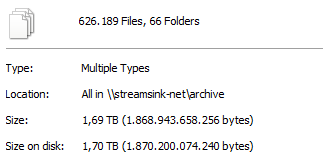

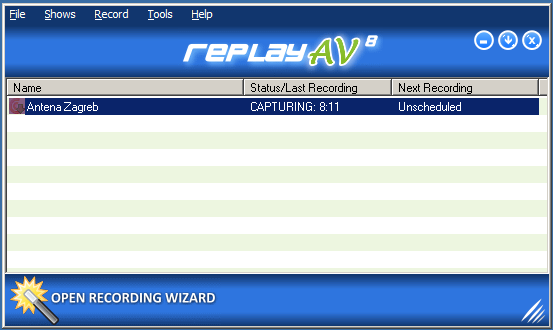

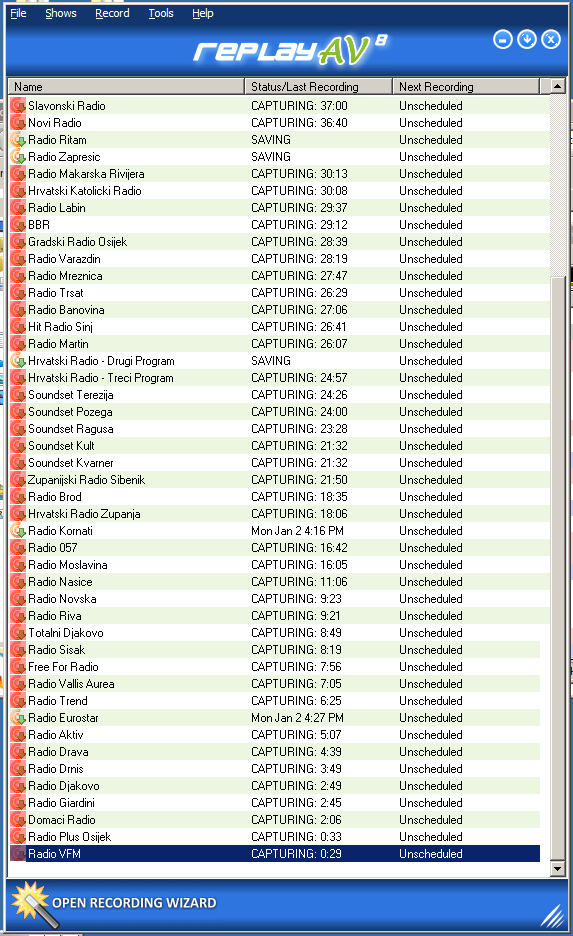

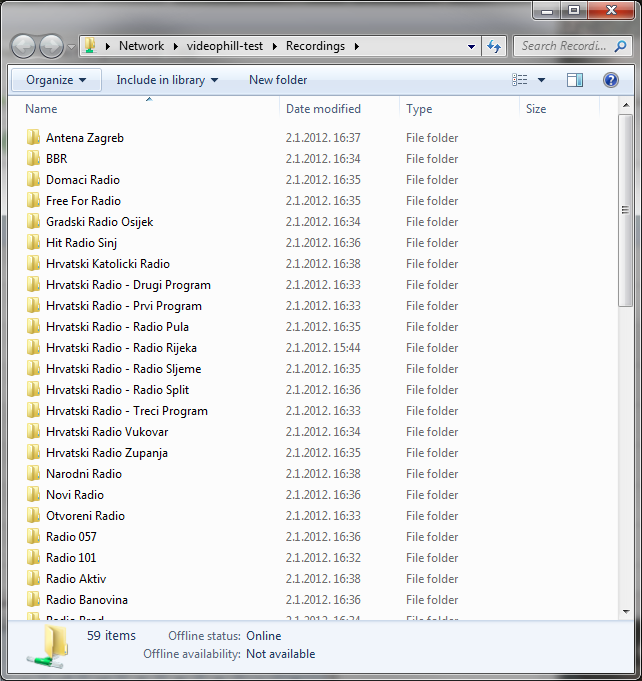

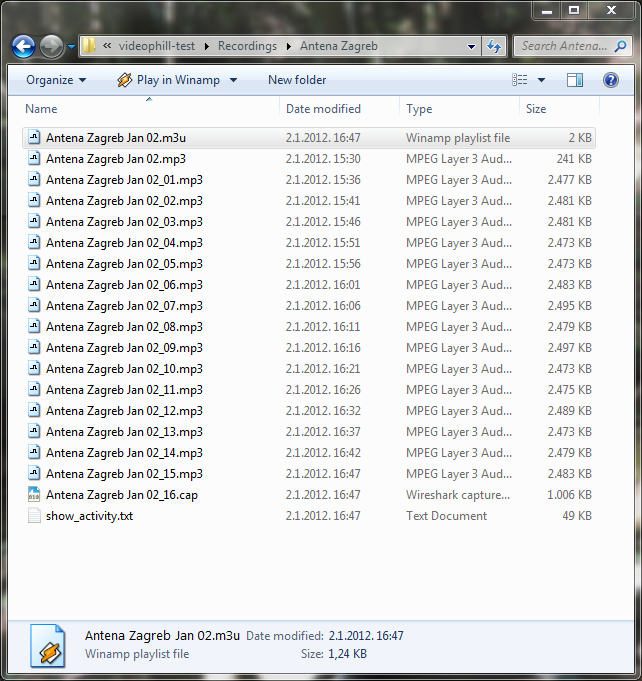

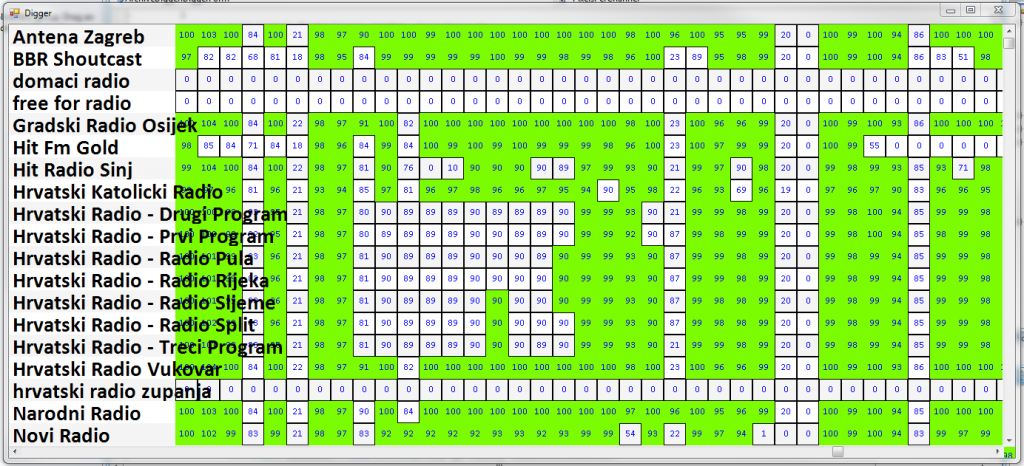

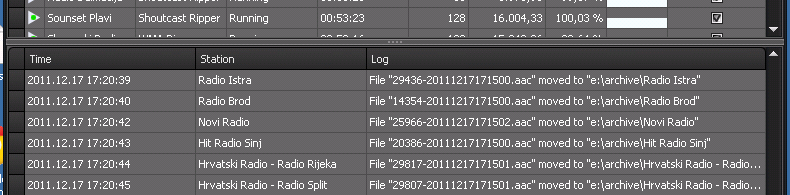

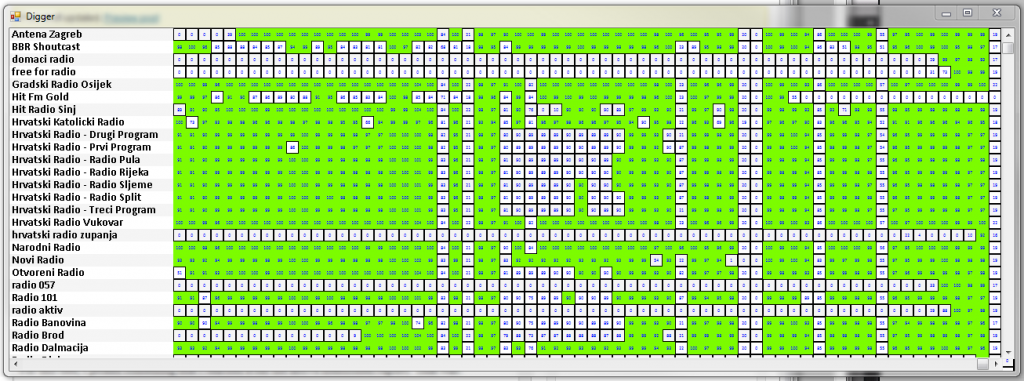

When you are in media monitoring, you have TONS of files. For example, look at this:

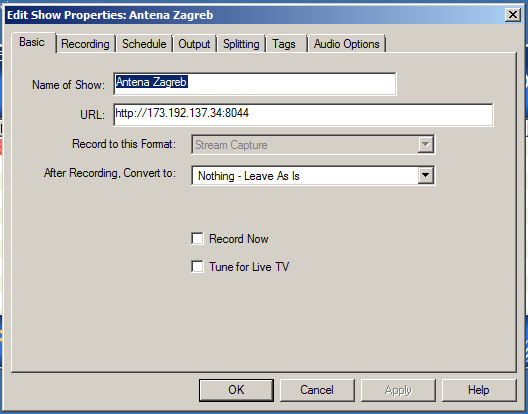

Every recorder, logger and input produces a number of files on your system. Of course, each application such as VideoPhill Recorder or StreamSink has the option of deleting files after they expire (after one month, for example), but what if you have some other way of gathering information (media, metadata, something) that won’t go away by itself? I have several such data sources, so I opted to create a MultiPurposeHighlyVersatile FileDeleter Application. I’ll probably find a better name later; for now let’s call it ‘deleter’ for short.

The Beginning

The application to delete files must be such a triviality, I can surely open Visual Studio and start to code immediately. Well, not really. In my head, that app will do every kind of deleting, so let’s not get hasty, and let’s do it by the numbers.

First, a short paragraph of text that will describe the vision, the problem that we try to solve with the app, in few simple words. That is the root of our development, and we’ll revisit it several times during the course of the development.

Vision:

‘Deleter’ should be able to free the hard drive of stale files (files older than some period) and keep free hard drive space above some predetermined minimum.

There, it’s simple enough that I can remember it, and I’ll be able to work down from it to the next step.

The Next Step

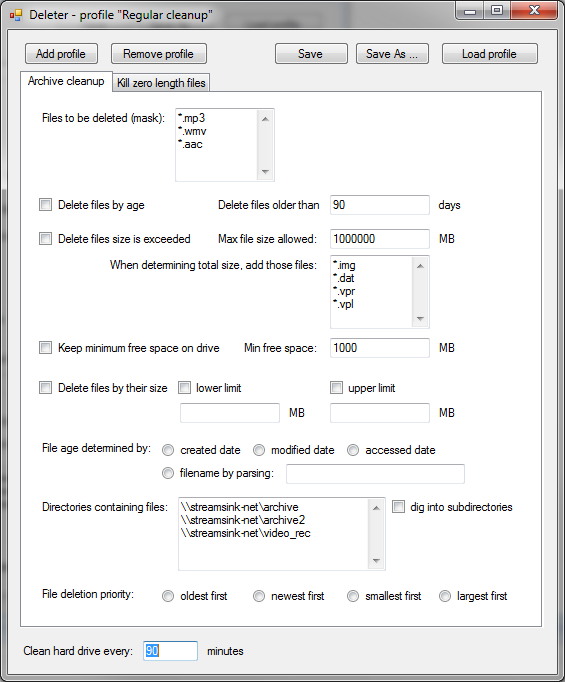

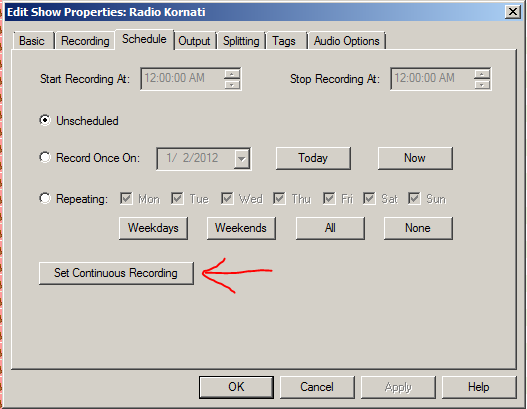

For me, the next step (let’s say in this particular case) would be to try and see what ‘features’ the app has. The only way that works for me is to create a mock of the application UI and write down the things that aren’t visible from the UI itself. Since this UI won’t do anything but gather the parameters that define the behavior of the app, it will be a simple one, and it will be possible to fit it nicely on one screen.

For the sketch I’ll use Visual Studio, because I’m most comfortable with it. If it weren’t my everyday tool, I’d probably use an application such as MockupScreens, which is a deliberately simple app-sketching tool with powerful features for analysts.

The process of defining the UI and writing down the requirements took some time: I repeatedly added something to the UI, then to the list below, until I had a much clearer picture of what I’m actually trying to do.

Features:

- it should be able to delete only certain files (defined by a ‘mask’ such as *.mp3)

- it should be able to delete files by their age

- it should be flexible in determining the AGE of the file:

  - various dates in the file properties: created, modified, accessed

  - by parsing the name of the file

- it should be able to delete from multiple directories

- it should be able to either scan a directory flat or dig into its subdirectories

- it should be able to delete files by criteria other than age

  - files should be deleted if their total size exceeds some defined size

    - in that case, other files should be taken into account, again by mask

  - files should be deleted if free drive space is less than some defined minimum

  - file size

- when deleting by criteria other than file age, it should let you specify which files should be first to go

- should be able to support multiple parameter sets at one time

- should run periodically at predetermined intervals

- should be able to load and save profiles

  - profiles should have names

- should disappear to the tray when minimized

- should have the lowest possible process priority
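To check that the mask, age and total-size requirements hang together, here is a minimal sketch of the selection logic. It is written in Python purely for illustration and is not the actual implementation; every function and parameter name is hypothetical. It only decides which files would go and deletes nothing. Parsing the age from the file name and the free-drive-space criterion are left out.

```python
import fnmatch
import time
from pathlib import Path

SECONDS_PER_DAY = 86400.0


def find_candidates(root, mask="*.mp3", recursive=True):
    """Yield files under root whose name matches the mask (flat or recursive scan)."""
    root = Path(root)
    paths = root.rglob("*") if recursive else root.glob("*")
    for path in paths:
        if path.is_file() and fnmatch.fnmatch(path.name, mask):
            yield path


def file_age_days(path, source="modified", now=None):
    """Age in days, based on one of the dates in the file properties."""
    st = path.stat()
    stamps = {
        # Note: st_ctime is creation time on Windows, but the time of the
        # last metadata change on most Unix systems.
        "created": st.st_ctime,
        "modified": st.st_mtime,
        "accessed": st.st_atime,
    }
    now = time.time() if now is None else now
    return (now - stamps[source]) / SECONDS_PER_DAY


def expired(paths, max_age_days, source="modified", now=None):
    """Files older than max_age_days."""
    return [p for p in paths if file_age_days(p, source, now) > max_age_days]


def over_quota(paths, max_total_bytes):
    """Oldest-first list of files to remove so the rest fit in max_total_bytes.

    Assumption: 'first to go' means oldest by modification time; the real
    app is meant to make this ordering configurable.
    """
    files = sorted(paths, key=lambda p: p.stat().st_mtime)
    total = sum(p.stat().st_size for p in files)
    victims = []
    for p in files:
        if total <= max_total_bytes:
            break
        victims.append(p)
        total -= p.stat().st_size
    return victims
```

Keeping selection separate from deletion makes a dry run cheap: the caller can print the returned list first and only unlink the files once the result looks right.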

As you look at the UI mock, you’ll see some mnemonic tricks that I use to display various options, for example:

- I filled the textboxes to provide even more context to the developer (myself wearing a different hat, in this case)

- I added vertical scrollbars even if text in multi-line textboxes isn’t overflowing, to suggest that there might be more entries

- for multiple-choice options I deliberately didn’t use a combobox (pull-down menu); I used radio buttons, again to provide visual cues to the various options without any need to interact with the mock

From Here…

I’ll let it rest for now, and tomorrow I’ll try to see if I can further nail down the requirements for the app. From there, when I get a good feeling that this is something I’m comfortable with, I’ll create an object interface that will contain all the options from the screen above. While doing that, I’ll probably update the requirements and the UI itself, and maybe even revisit The Mighty Vision above.
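As a rough idea of what such an options object could look like, here is a hedged sketch, again in Python for illustration only. The field names, defaults and the JSON format are my assumptions, derived from the feature list rather than from the real UI.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class ParameterSet:
    """One set of deletion options (hypothetical field names)."""
    directories: List[str] = field(default_factory=list)
    mask: str = "*"
    recursive: bool = False
    age_source: str = "modified"  # created | modified | accessed | filename
    max_age_days: Optional[float] = None
    max_total_bytes: Optional[int] = None
    min_free_bytes: Optional[int] = None


@dataclass
class Profile:
    """A named collection of parameter sets that runs at a fixed interval."""
    name: str
    interval_minutes: int = 60
    parameter_sets: List[ParameterSet] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() also converts the nested ParameterSet objects to dicts.
        return json.dumps(asdict(self), indent=2)

    @classmethod
    def from_json(cls, text: str) -> "Profile":
        raw = json.loads(text)
        sets = [ParameterSet(**s) for s in raw.pop("parameter_sets", [])]
        return cls(parameter_sets=sets, **raw)
```

A profile then survives a save and load round trip, for example `Profile.from_json(profile.to_json()) == profile`.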

BTW, it took me about 2 hours to do both the article and the work.