I’m about to put together a media monitoring offer for my first end-user client of this kind. Since this caught me totally unprepared, I’m on a journey to discover prices that would be fair to them but still adequate for the company.

Media monitoring is meant here in a very limited sense: they only need an advertisement verification service, and that is the only service I can provide at the moment anyway.

For this estimation, I’ll try to take their point of view and provide some added value. At the end of this post, I’ll summarize the thoughts presented within.

The case

The prospect is a retail company with stores all across the country. They advertise in all media globally and, of course, they use radio.

For every radio advertisement they have to pay some amount, defined by the station’s price list and subject to various discounts. For each payment they always get an invoice, and from most radio stations they also get a ‘proof-of-playback’ document.

A proof-of-playback document is usually generated from the playout logs of the automation software and processed with a system such as SpotKontrol. These documents are accurate most of the time, but sometimes there are discrepancies due to operator error or some other intricacy.

Everything in the process is done in good faith, but sometimes advertisements that weren’t played are shown in the proof-of-playback document, and sometimes it’s the other way around. Each playback is charged at some amount, so if the proof is incorrect, one party or the other is losing money. That isn’t a great situation for either of them.

So the idea is to provide a service that verifies the document provided by the media by obtaining real, reference information on the playback of the advertisements.

Calculation

Some math and abstract thinking are required to read this section. If you’d rather not read it, just skip to the end where the results are shown.

In my estimation process I’ll always bound the numbers so that they show one extreme of the possible cost range, which gives a cost that is always AT LEAST that amount. For example, if there are 10 radio stations in question and they have various costs of advertising per second, I’ll use the smallest of them.

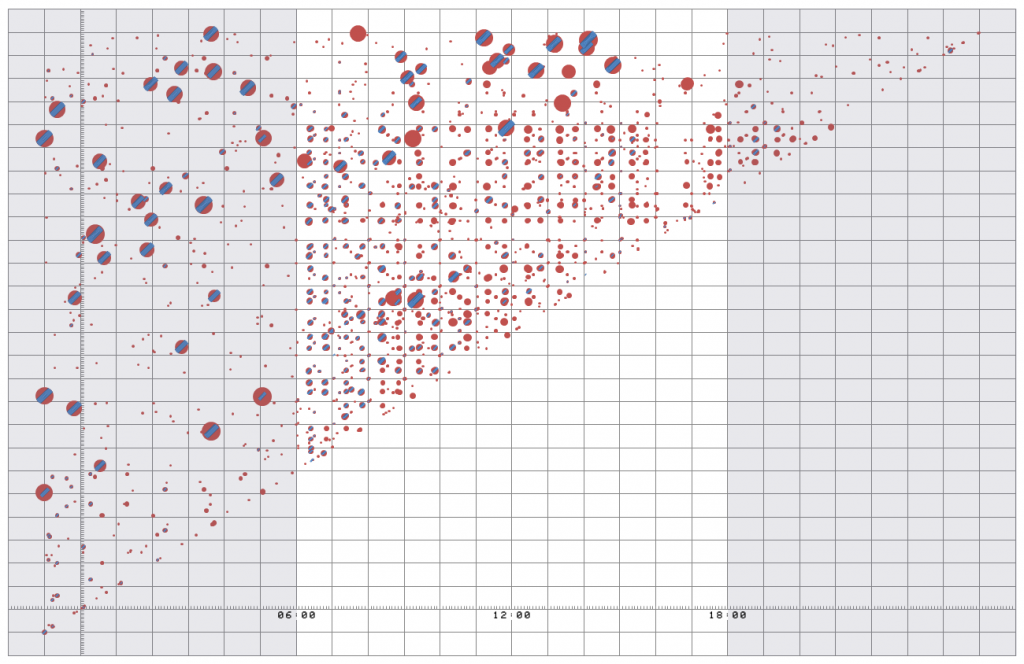

The first estimate is that such a retail store will have AT LEAST 2 advertisements a day on AT LEAST 10 global radio channels. We will also say that they advertise only on workdays, which gives us AT LEAST 20 days per month, for a total of 2 * 10 * 20 = 400 advertisement playbacks. That is the lower bound, so try to remember that. Also, we used only 10 global radio stations, while most advertisers will go into local advertising as well.

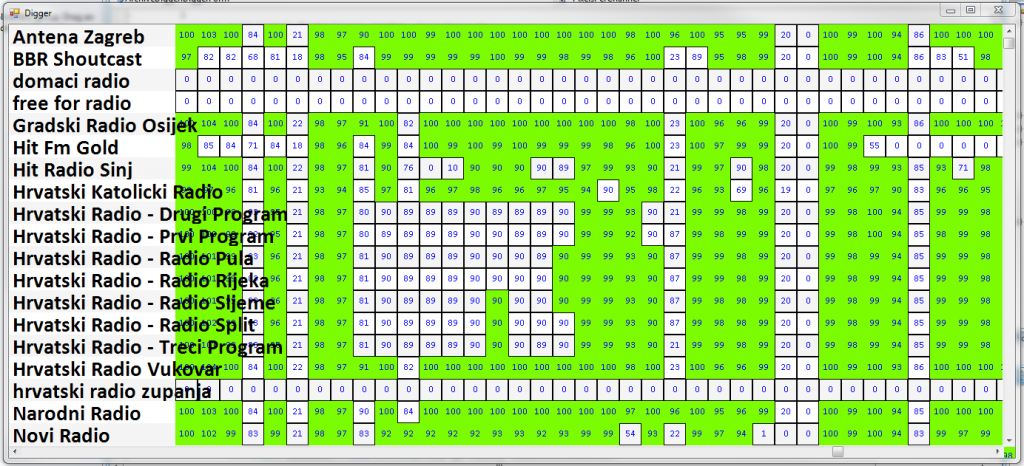

Now, let’s try to estimate how much each advertisement playback will cost. For that, we’ll take 5 prominent radio stations and look at their price lists. We will also say that the advertisement in question will be AT LEAST 30″ in duration. Radio stations:

The prices (in kn) for a 30″ advertisement playback on those stations are: 360, 660, 110, 520, 400. I would recommend that Radio Istra raise their advertising prices, and because of them I’ll skip the lowest price and take the next lowest as our estimate here: 360 kn.

From before, we had 400 advertisement playbacks per month, which at a rate of 360 kn amounts to 144.000 kn. Since we promised to use the LOWEST bound, and some could argue that companies such as this one can obtain various discounts, let’s say that the maximum discount is 50%. That brings our cost down to half: 72.000 kn spent on advertising each month. In reality the number is certainly different, but let’s go with this estimate here.
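The lower-bound arithmetic so far can be written as a short Python sketch. All figures come from the text; the function and variable names are only illustrative, and the rule of skipping the single lowest price is my reading of the choice of 360 kn.

```python
# Lower-bound estimate of monthly radio advertising spend.
# Figures are taken from the text; names are illustrative assumptions.

ADS_PER_DAY = 2                            # at least 2 ads a day per station
STATIONS = 10                              # at least 10 global radio channels
WORKDAYS_PER_MONTH = 20                    # workdays only
PRICES_30S_KN = [360, 660, 110, 520, 400]  # 30-second spot prices, in kn
MAX_DISCOUNT = 0.50                        # assumed maximum discount


def monthly_playbacks(ads_per_day, stations, days):
    """Number of advertisement playbacks per month."""
    return ads_per_day * stations * days


def conservative_price(prices):
    """Skip the single lowest price and use the next lowest one."""
    return sorted(prices)[1]


def monthly_spend_kn(playbacks, price_kn, discount):
    """Monthly spend after applying the discount."""
    return playbacks * price_kn * (1 - discount)


playbacks = monthly_playbacks(ADS_PER_DAY, STATIONS, WORKDAYS_PER_MONTH)
price = conservative_price(PRICES_30S_KN)
spend = monthly_spend_kn(playbacks, price, MAX_DISCOUNT)

print(playbacks, price, spend)  # 400 360 72000.0
```

Changing any of the constants shows how quickly the protected amount grows once local stations or weekend advertising are included.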

Now we know what we are insuring. Let’s see what it would cost to protect that investment manually.

Let’s suppose that we have in place:

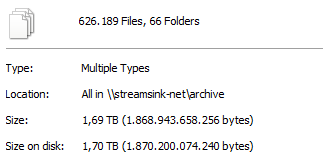

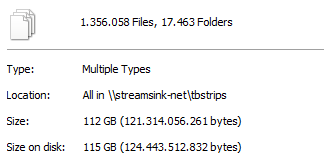

- equipment to record and store 10 radio stations worth of broadcasting material (StreamSink for example)

- means of reviewing (audibly) the archive of the broadcast material

- a person that is trained to do all that.

My information is that such a person would cost about $12 per hour in the USA and about $4 to $6 per hour in cheap-labor countries. Let’s say that our guy will cost $8, or about 50 kn, per hour.

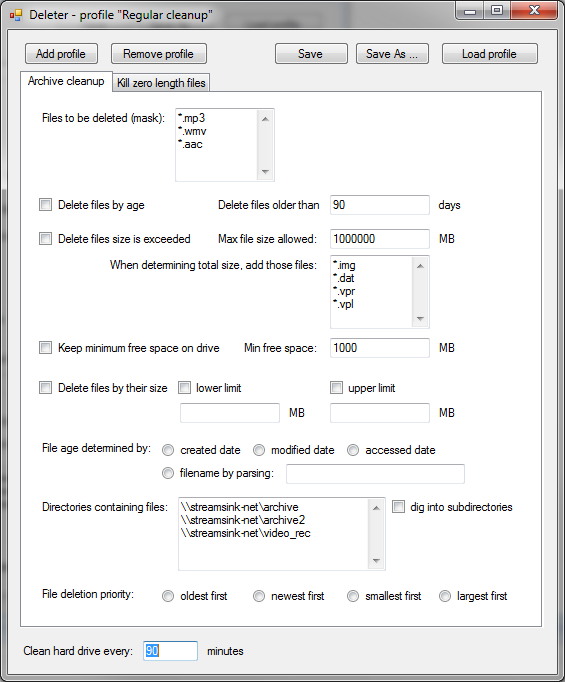

From my experience, and using VideoPhill Player to access the archive, confirming 20 advertisement playbacks takes about one hour if we have a proof-of-playback document, and at least 2 hours if we don’t, since whole advertisement blocks would have to be reviewed. We also assume here that our operator is HIGHLY familiar with the scheduling practices of each radio station and won’t stray too much while searching for the advertisement blocks.

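So, with all the equipment and trained staff in place, 400 playbacks take about 20 hours to verify with a proof-of-playback document and about 40 hours without one. At 50 kn per hour, the cost of verification for that volume is from 1000 kn to 2000 kn per month. If we take the average of 1500 kn and compare the analyst’s cost to the 72.000 kn investment that he protects, we come up with a ratio of 1:48, or about 2%.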

Result: we can say that the cost of verifying advertisements is about 2% of the cost of the advertising.
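As a rough check of that ratio, here is a minimal Python sketch of the manual verification cost. The hourly rate, review speed and monthly figures are the ones assumed in the text; the names are only illustrative.

```python
# Manual verification cost compared with the advertising spend it protects.
# All numbers are the assumptions stated in the text.

PLAYBACKS_PER_MONTH = 400
MONTHLY_SPEND_KN = 72_000
HOURLY_RATE_KN = 50               # about $8 per hour
PLAYBACKS_PER_BATCH = 20          # playbacks confirmed in one review batch
HOURS_PER_BATCH_WITH_PROOF = 1    # proof-of-playback document available
HOURS_PER_BATCH_WITHOUT_PROOF = 2 # whole ad blocks must be reviewed


def verification_cost_kn(playbacks, hours_per_batch):
    """Monthly operator cost for reviewing the given number of playbacks."""
    batches = playbacks / PLAYBACKS_PER_BATCH
    return batches * hours_per_batch * HOURLY_RATE_KN


low = verification_cost_kn(PLAYBACKS_PER_MONTH, HOURS_PER_BATCH_WITH_PROOF)
high = verification_cost_kn(PLAYBACKS_PER_MONTH, HOURS_PER_BATCH_WITHOUT_PROOF)
average = (low + high) / 2
ratio = average / MONTHLY_SPEND_KN

print(f"{low:.0f} kn to {high:.0f} kn, average {average:.0f} kn")
print(f"share of advertising spend: {ratio:.1%}")
```

With these inputs the average cost is 1500 kn, which is 1/48 of the 72.000 kn monthly spend.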

The percentage would be even higher for other media with lower advertising costs, since the operator would have to scan the material at the same cost while the advertising itself is paid less.

Conclusion

Since I’m not here to push a service onto someone who doesn’t need it, I’ll only try to offer a fair price to someone who recognizes the need for it. To do that, I’ll apply a 50% discount to my already low estimate, and then see whether the company can sustain the service at that fee.

That being said, the conclusion is that

to provide a list of played commercials, we’ll charge 1% of the estimated monthly advertising cost for the channel(s) covered.
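For the example used throughout this post, the fee works out as in the small Python sketch below. The 72.000 kn figure is the lower-bound monthly spend estimated earlier; the function name and the default rate parameter are only illustrative.

```python
# Monitoring fee as a fixed share of the estimated monthly advertising cost.
# The 1% rate is the one proposed in the text; names are illustrative.

def monitoring_fee_kn(monthly_ad_spend_kn, fee_rate=0.01):
    """Fee charged for delivering the list of played commercials."""
    return monthly_ad_spend_kn * fee_rate


example_spend_kn = 72_000  # lower-bound estimate from the calculation above
fee = monitoring_fee_kn(example_spend_kn)

print(f"monthly fee: {fee:.0f} kn")
```

Under these assumptions the fee comes to about 720 kn per month for the 10 stations in the example.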

We’ll start from there, and see where it takes us.